TimeDependentExpMPOEvolution

full name: tenpy.algorithms.mpo_evolution.TimeDependentExpMPOEvolution

parent module:

tenpy.algorithms.mpo_evolutiontype: class

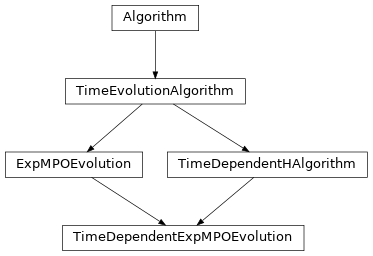

Inheritance Diagram

Methods

|

|

|

Calculate |

Gives an approximate prediction for the required memory usage. |

|

|

Evolve by N_steps*dt. |

|

|

Return necessary data to resume a |

|

Prepare an evolution step. |

|

Re-initialize a new |

|

Resume a run that was interrupted. |

|

Perform a (real-)time evolution of |

|

Run the time evolution for N_steps * dt. |

|

Initialize algorithm from another algorithm instance of a different class. |

Class Attributes and Properties

whether the algorithm supports time-dependent H |

- class tenpy.algorithms.mpo_evolution.TimeDependentExpMPOEvolution(psi, model, options, **kwargs)[source]

Bases:

TimeDependentHAlgorithm,ExpMPOEvolutionVariant of

ExpMPOEvolutionthat can handle time-dependent hamiltonians.See details in

TimeDependentHAlgorithmas well.- calc_U(dt, order=2, approximation='II')[source]

Calculate

self._U_MPO.This function calculates the approximation

U ~= exp(-i dt_ H)withdt_ = dt` for ``order=1, ordt_ = (1 - 1j)/2 dtanddt_ = (1 + 1j)/2 dtfororder=2.

- estimate_RAM(mem_saving_factor=None)[source]

Gives an approximate prediction for the required memory usage.

This calculation is based on the requested bond dimension, the local Hilbert space dimension, the number of sites, and the boundary conditions.

- Parameters:

mem_saving_factor (float) – Represents the amount of RAM saved due to conservation laws. By default, it is ‘None’ and is extracted from the model automatically. However, this is only possible in a few cases and needs to be estimated in most cases. This is due to the fact that it is dependent on the model parameters. If one has a better estimate, one can pass the value directly. This value can be extracted by building the initial state psi (usually by performing DMRG) and then calling

print(psi.get_B(0).sparse_stats())TeNPy will automatically print the fraction of nonzero entries in the first line, for example,6 of 16 entries (=0.375) nonzero. This fraction corresponds to the mem_saving_factor; in our example, it is 0.375.- Returns:

usage – Required RAM in MB.

- Return type:

See also

tenpy.simulations.simulation.estimate_simulation_RAMglobal function calling this.

- evolve(N_steps, dt)[source]

Evolve by N_steps*dt.

Subclasses may override this with a more efficient way of do N_steps update_step.

- Parameters:

Options

- config TimeEvolutionAlgorithm

option summary dt in TimeEvolutionAlgorithm

Minimal time step by which to evolve.

max_N_sites_per_ring (from Algorithm) in Algorithm

Threshold for raising errors on too many sites per ring. Default ``18``. [...]

max_trunc_err in TimeDependentHAlgorithm.evolve

Threshold for raising errors on too large truncation errors. Default ``0.01 [...]

N_steps in TimeEvolutionAlgorithm

Number of time steps `dt` to evolve by in :meth:`run`. [...]

preserve_norm in TimeEvolutionAlgorithm

Whether the state will be normalized to its initial norm after each time st [...]

start_time in TimeEvolutionAlgorithm

Initial value for :attr:`evolved_time`.

trunc_params (from Algorithm) in Algorithm

Truncation parameters as described in :cfg:config:`truncation`.

- option max_trunc_err: float

Threshold for raising errors on too large truncation errors. Default

0.01. Seeconsistency_check(). When the total accumulated truncation error (itseps) exceeds this value, we raise. Can be downgraded to a warning by setting this option toNone.

- Returns:

trunc_err – Sum of truncation errors introduced during evolution.

- Return type:

- get_resume_data(sequential_simulations=False)[source]

Return necessary data to resume a

run()interrupted at a checkpoint.At a

checkpoint, you can savepsi,modelandoptionsalong with the data returned by this function. When the simulation aborts, you can resume it using this saved data with:eng = AlgorithmClass(psi, model, options, resume_data=resume_data) eng.resume_run()

An algorithm which doesn’t support this should override resume_run to raise an Error.

- Parameters:

sequential_simulations (bool) – If True, return only the data for re-initializing a sequential simulation run, where we “adiabatically” follow the evolution of a ground state (for variational algorithms), or do series of quenches (for time evolution algorithms); see

run_seq_simulations().- Returns:

resume_data – Dictionary with necessary data (apart from copies of psi, model, options) that allows to continue the algorithm run from where we are now. It might contain an explicit copy of psi.

- Return type:

- prepare_evolve(dt)[source]

Prepare an evolution step.

This method is used to prepare repeated calls of

evolve()given themodel. For example, it may generate approximations ofU=exp(-i H dt). To avoid overhead, it may cache the result depending on parameters/options; but it should always regenerate it ifforce_prepare_evolveis set.- Parameters:

dt (float) – The time step to be used.

- reinit_model()[source]

Re-initialize a new

modelat currentevolved_time.Skips re-initialization if the

model.options['time']is the same as evolved_time. The model should read out the option'time'and initialize the correspondingH(t).

- resume_run()[source]

Resume a run that was interrupted.

In case we saved an intermediate result at a

checkpoint, this function allows to resume therun()of the algorithm (after re-initialization with the resume_data). Since most algorithms just have a while loop with break conditions, the default behavior implemented here is to just callrun().

- run()[source]

Perform a (real-)time evolution of

psiby N_steps * dt.You probably want to call this in a loop along with measurements. The recommended way to do this is via the

RealTimeEvolution.

- run_evolution(N_steps, dt)[source]

Run the time evolution for N_steps * dt.

Updates the model after each time step dt to account for changing H(t). For parameters see

TimeEvolutionAlgorithm.

- classmethod switch_engine(other_engine, *, options=None, **kwargs)[source]

Initialize algorithm from another algorithm instance of a different class.

You can initialize one engine from another, not too different subclasses. Internally, this function calls

get_resume_data()to extract data from the other_engine and then initializes the new class.Note that it transfers the data without making copies in most case; even the options! Thus, when you call run() on one of the two algorithm instances, it will modify the state, environment, etc. in the other. We recommend to make the switch as

engine = OtherSubClass.switch_engine(engine)directly replacing the reference.- Parameters:

cls (class) – Subclass of

Algorithmto be initialized.other_engine (

Algorithm) – The engine from which data should be transferred. Another, but not too different algorithm subclass-class; e.g. you can switch from theTwoSiteDMRGEngineto theOneSiteDMRGEngine.options (None | dict-like) – If not None, these options are used for the new initialization. If None, take the options from the other_engine.

**kwargs – Further keyword arguments for class initialization. If not defined, resume_data is collected with

get_resume_data().

- time_dependent_H = True

whether the algorithm supports time-dependent H